#46 - Are you still making your own decisions?

Part 2 of 2: The science of decision making and how to stop AI doing it all for you

This is the second article in a two-part series on two core competencies you need to invest in to keep them from atrophying in the age of AI. The first covered strategic thinking, as well as why it’s important to maintain your cognitive skills. Read it first, then continue here.

I still have the mental scars from some of my earliest Product reviews more than a decade ago. At the time I led a product squad focused on building new products — read: risky, low volume, unproven — as a new line within our portfolio of mature products. Every other Wednesday at the end of the sprint, we would present back to the execs what we had been working on: performance data, customer stories, what we'd learned. As PMs we'd make recommendations on what to do next. The problem was, my slot was at the end of the day, and I could see the exec team flagging after reviewing the work of 8 or more teams. I now know that the end of the day is the worst time to evaluate big bets, because cognitive fatigue compromises the quality of decisions, in particular leading to risk polarisation: decisions become either ultra conservative or impulsive, with no middle ground (Jeon, 2026).

Decision fatigue is just one example of how our cognitive limits shape the quality of our choices. Which brings us to the core competencies framework I updated last week: the other side of your relationship with context is decision making. And there’s a lot of cognitive science around this. You may be familiar with the seminal paper by Daniel Kahneman and Amos Tversky, Prospect theory: An analysis of decision under risk, first published in 1979. Kahneman was awarded the Nobel Memorial Prize in Economic Sciences in 2002 (Tversky had died in 1996, and the prize is not awarded posthumously), and he later brought this body of research to a wide audience in the popular psychology book Thinking, Fast and Slow. There Kahneman dispelled the myth that we act as rational beings when making decisions (Homo economicus, or economic man), showing instead that we are subject to emotions, biases and heuristics that shortcut most of our thinking to make it less cognitively demanding. And using AI may enable us to take even more of those shortcuts.

The brain is a greedy organ. On average it weighs just 1.2-1.4kg (~2% of body mass), yet consumes 20% of our total metabolic energy. It requires a constant, uninterrupted supply of glucose and oxygen, and essential nutrients including omega-3 fatty acids, vitamins and antioxidants to maintain cell structure and neurotransmitters (chemicals such as serotonin, dopamine, glutamate, and norepinephrine). Without these, the brain structures degrade, severely affecting cognition (e.g. stroke), and ultimately life. For this reason our brains evolved shortcuts to minimise the resource-intensive slow thinking (deliberate, effortful, logical, calculating, conscious) — saving that for critical decisions only. Instead, the majority of our decisions are made fast — through automatic, frequent, emotional, stereotypic, and unconscious thinking, which conserves brain energy.

What is decision making?

Decision making is the ability to reach a sound judgement and commit to it, under conditions of uncertainty and incomplete information. Decisions can feel easier when there is a lot of information available, because of the perceived reduction in uncertainty. In 1961 Daniel Ellsberg described the pattern behind this, now known as ambiguity aversion: the tendency to prefer known risks over unknown ones, even when the unknown option might objectively be better. However, you can never have perfect information, so waiting for certainty is itself a decision, and usually the wrong one.

Let’s look at the skills that make up this core competency:

Using data: Identify, source, evaluate, and apply relevant evidence to inform and strengthen decision making.

Sense making: Organise ambiguous, incomplete, or conflicting information into a coherent picture that enables action.

Inquiry: Question the framing of a problem before accepting it, generating better questions rather than better answers to the wrong question.

Critical thinking: Evaluate information and arguments objectively, identifying assumptions, inconsistencies, and logical gaps before reaching a conclusion.

Risk evaluation: Assess the probability and consequence of possible outcomes, make a judgement call, and commit without waiting for certainty.

Conviction: Back your own judgement and commit to a course of action decisively, even under conditions of incomplete information and potential opposition.

How these skills develop

What shape is Kiki? And what shape is Bouba? Developmental psychologists have tested this extensively, and shown that Kiki is sharp and spiky, whilst Bouba is soft and round. The brain innately maps correspondences across sensory domains, such as sound to shape, colour to temperature, pitch to size — these are a feature of the brain’s architecture rather than learned. And from birth, babies use these correspondences to show preferences towards certain stimuli, such as turning towards food sources. In one sense, some of our decision making may be innate and biologically determined.

Meaningful conscious decision making starts around age 3, with the development of preferences and simple reasoning. By adolescence, the capacity is largely functional, but still heavily influenced by emotional processing over rational deliberation — the prefrontal cortex isn't yet fully regulating the limbic system, which is why teenagers are more impulsive and reward-seeking. Adult-level decision making, integrating emotion and reasoning effectively, develops through early adulthood and continues to refine with experience. As an adult, it is estimated that you are making around 35,000 decisions each day.

What I find particularly interesting is the role of emotions in decision making. Antonio Damasio is a neuroscientist who studied patients with damage to the ventromedial prefrontal cortex, a part of the brain involved in integrating emotion into decision making. He found that whilst they retained their intelligence and performed normally on tests of logical reasoning, they became catastrophically bad at making decisions in real life.

This led to his somatic marker hypothesis: the idea that emotions — experienced as physical sensations in the body — act as rapid signals that help us evaluate options before conscious reasoning kicks in. Far from being a corrupting influence on rational thought, emotion is a necessary component of good decision making. Without it, you can reason perfectly well but can't prioritise or commit.

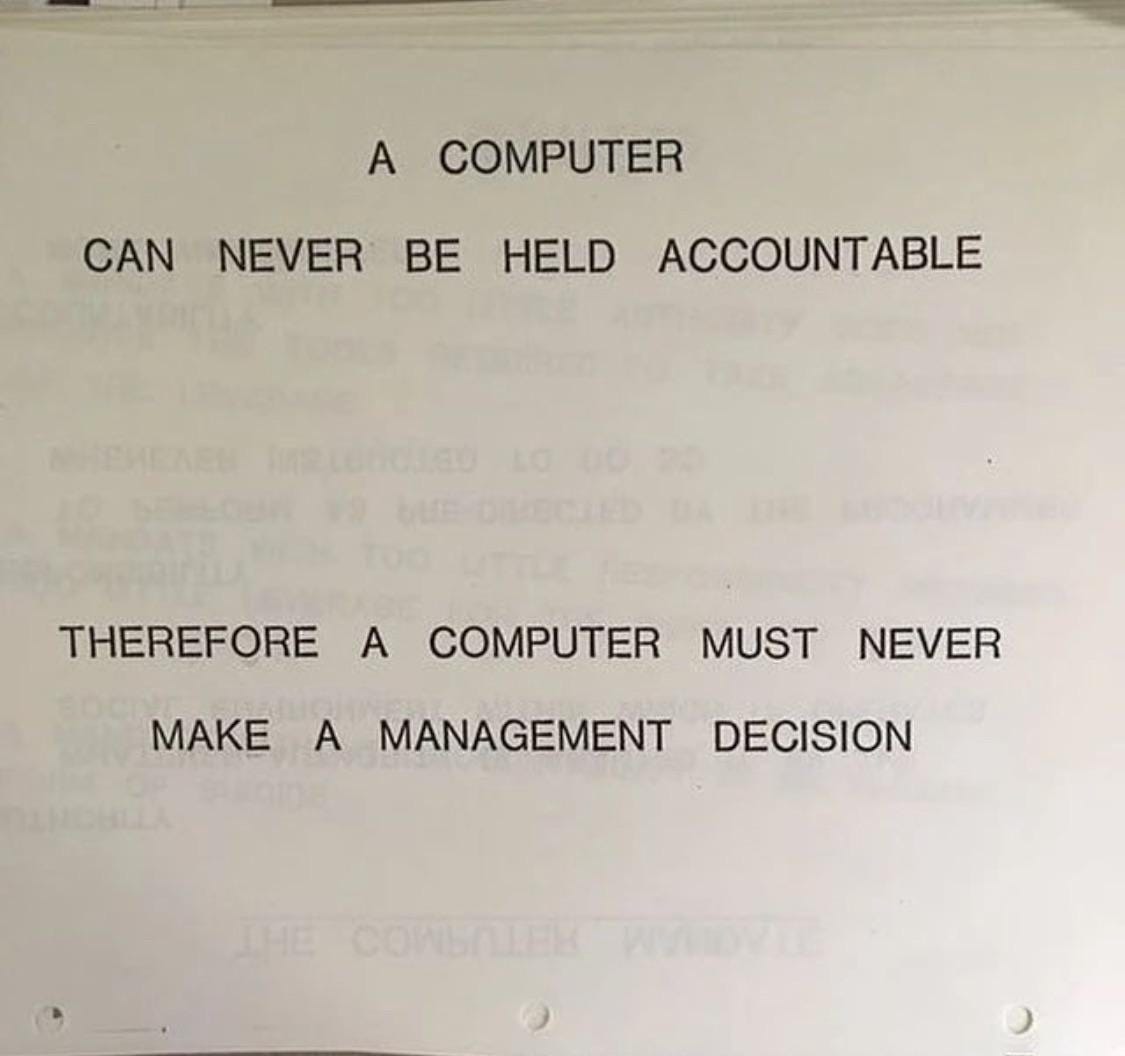

Taken together, what this shows us is that the development of decision making as a competency depends upon three factors: practice, emotions and feedback loops. Notice how these three are inaccessible to AI: it is not embodied so doesn’t experience emotions, it doesn’t learn from your interactions with it, and it doesn’t do anything beyond compute probabilistic answers. It doesn’t have consequences and accountability in the real world.

But people are real, and can be held accountable. So how do you retain and develop your decision making skills, when the temptation is to turn to AI?

Exercises to develop your decision making

Here are three exercises you can use yourself or share with your team to develop better decision making.

Exercise 1: The data audit (using data)

For a decision you're currently facing, list every piece of evidence you're drawing on. Then ask yourself (checking your biases):

Is this real data or is it an assumption? (availability bias)

How current is it? (recency bias)

Who collected it and why? (authority bias)

How much of what I think I know is actually evidence versus pattern recognition from past experience? (confirmation bias)

Challenge yourself to come up with alternative evidence — what else could be going on here that you haven’t considered? How might your manager, partner, or child see this data differently?

Exercise 2: Socratic questioning

Before accepting a problem at face value, interrogate it. Socratic questioning, a technique used in coaching and therapy, works by systematically challenging assumptions until you reach something more solid underneath. Apply it to a decision you’re currently facing:

Clarify the question: What exactly are you deciding? Can you state it in one sentence?

Challenge the assumption: Why are you framing it this way? What are you taking for granted?

Examine the evidence: What do you actually know, versus what are you assuming?

Consider alternative perspectives: How would someone who disagrees frame this problem?

Question the question: Is this the right problem to be solving? What would you be deciding if this constraint didn’t exist?

The goal isn’t to reach an answer, but to arrive at a better question.

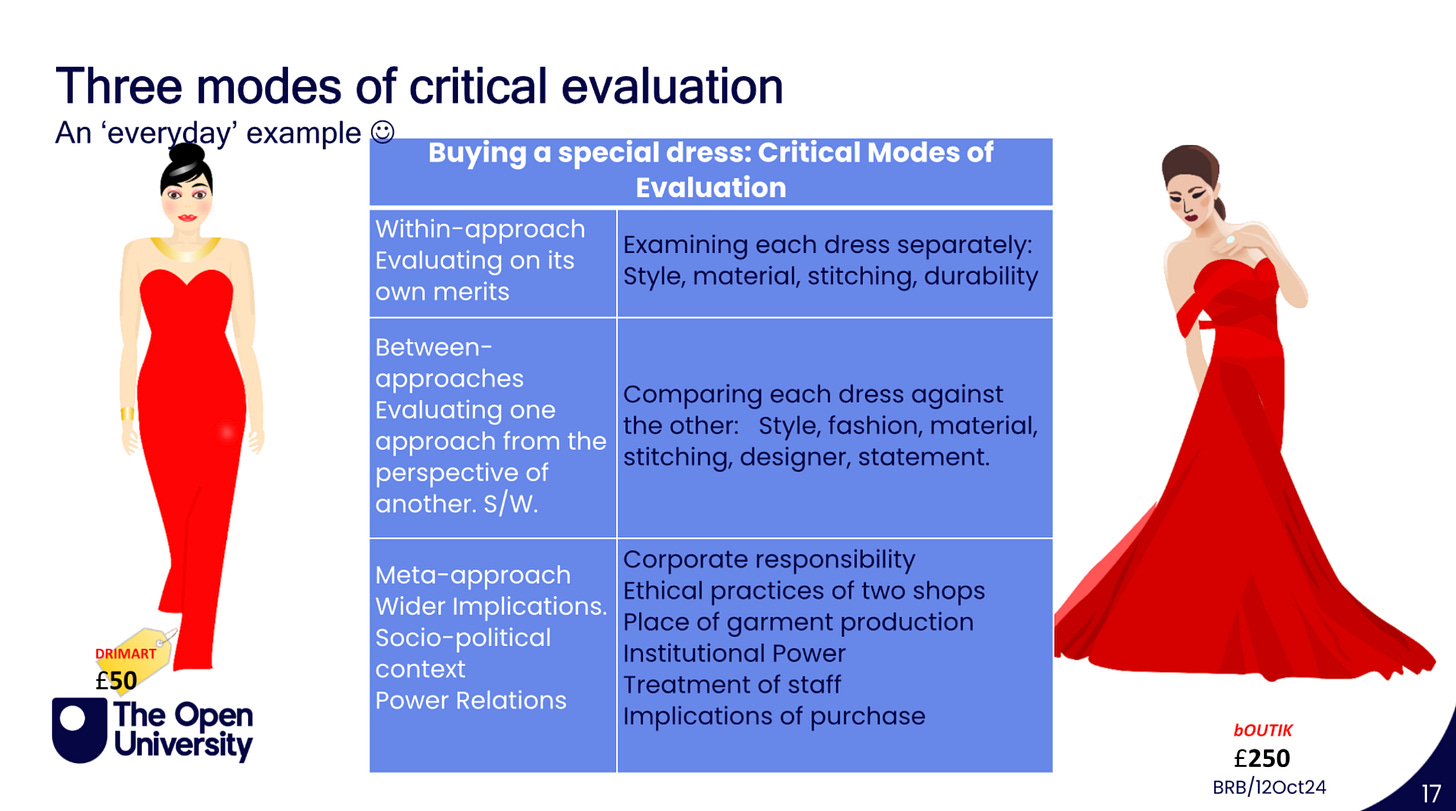

Exercise 3: Three modes of critical evaluation

This framework comes from my Open University studies, drawing on the work of Johanna Motzkau. When facing a significant decision, run it through three modes of evaluation:

Within approach: Evaluate the option on its own merits. What are its aims and assumptions? Does it do what it claims to do?

Between approaches: Compare it against the alternatives. What are the relative strengths and weaknesses of each option?

Meta approach: Step back entirely. What are the wider implications? What power relations or taken-for-granted assumptions are shaping how the problem has been framed in the first place?

We learnt this through the example of the red dress — see the slide below from one of my tutorials for more.

Bonus exercise: the rule of 3

Some decisions are easy to make, others less so. If you’ve been agonising over one for a while, use this technique to break through. Set yourself a timer for 3 minutes, and evaluate the decision against the following 3 criteria: cost, complexity, confidence. At the end of 3 minutes, make the decision (any decision) — and fully commit to it, whatever happens.

AI-augmented decision making

The human-AI sandwich I shared last time is also relevant here — with two important caveats. You’re going to get the most value out of this process when you don’t outsource inquiry (as it’s essential to sense making) and conviction (the moment of making the decision and believing in it). Instead, use AI to support you with the inputs: gathering and analysing data, and evaluating options and risk.

You can also use AI to check your biases, specifically prompting it to challenge itself and you on the most common ones:

Confirmation bias: AI will find evidence for whatever position you’ve already taken if you prompt it that way. But prompted to play devil’s advocate, it can surface contradictory evidence you wouldn’t have gone looking for yourself.

Anchoring bias: the first piece of information you encounter shapes all subsequent judgement. If you feed AI your initial framing, it will tend to work within that anchor rather than challenge it. You have to explicitly ask it to.

Availability bias: you overweight recent or memorable examples. AI has access to a much broader range of cases and can surface less obvious precedents.

Dunning-Kruger effect: the less you know about a domain, the more likely you are to overestimate your competence in it. AI can expose gaps in your knowledge quickly, though it can also give you false confidence by generating plausible-sounding content in areas where you lack the expertise to evaluate it.

In-group bias: you favour solutions and people that feel familiar. AI has no social allegiances and can evaluate options without that filter, though it carries its own training biases.

Sunk cost bias: you continue investing in a failing course of action because of what you’ve already committed. AI has no emotional attachment to past decisions and can evaluate the current situation on its merits.

Here’s a prompt you can use with any decision you need to make:

I need to make a decision about [describe the decision]. Here is my current thinking: [describe your position and reasoning].

Before responding, I want you to challenge my thinking rather than validate it. Specifically:

What assumptions am I making that I haven’t questioned?

What evidence contradicts my current position?

What are the strongest arguments for the options I’m not currently favouring?

Where might I be overweighting recent or memorable examples over broader evidence?

What would someone who fundamentally disagrees with my framing say?

Are there sunk costs or past commitments influencing my thinking that shouldn’t factor into this decision?

Do not tell me what to decide. Help me think more clearly about what I might be missing.

Then consider its answer as another data point in your decision making.
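If you find yourself reusing the prompt above, a few lines of Python can fill in the two bracketed placeholders for you. This is only a minimal sketch: the function name `build_challenge_prompt` and the constant `CHALLENGE_QUESTIONS` are my own illustrative names, not part of any AI tool, and the sketch only builds the text. You still paste the result into whichever assistant you use.

```python
# Hypothetical helper: fills the two placeholders in the challenge prompt.
# Names are illustrative assumptions; nothing here calls an AI service.

CHALLENGE_QUESTIONS = [
    "What assumptions am I making that I haven't questioned?",
    "What evidence contradicts my current position?",
    "What are the strongest arguments for the options I'm not currently favouring?",
    "Where might I be overweighting recent or memorable examples over broader evidence?",
    "What would someone who fundamentally disagrees with my framing say?",
    "Are there sunk costs or past commitments influencing my thinking "
    "that shouldn't factor into this decision?",
]


def build_challenge_prompt(decision: str, thinking: str) -> str:
    """Return the challenge prompt with the decision and current thinking filled in."""
    decision = decision.strip().rstrip(".")
    thinking = thinking.strip()
    if not decision or not thinking:
        # The prompt only works if you have stated your own position first.
        raise ValueError("Describe both the decision and your current thinking.")

    questions = "\n".join(f"- {q}" for q in CHALLENGE_QUESTIONS)
    return (
        f"I need to make a decision about {decision}. "
        f"Here is my current thinking: {thinking}\n\n"
        "Before responding, I want you to challenge my thinking rather than "
        "validate it. Specifically:\n\n"
        f"{questions}\n\n"
        "Do not tell me what to decide. Help me think more clearly about "
        "what I might be missing."
    )


if __name__ == "__main__":
    print(
        build_challenge_prompt(
            "whether to sunset the legacy reporting tool",
            "Usage is low, so I lean towards retiring it.",
        )
    )
```

The helper refuses to run without your own reasoning on purpose: writing down your position before asking for a challenge keeps the inquiry and the conviction with you.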

One final note: much of the public discourse critically evaluating AI centres the within and between approaches — is it accurate, is it better than the alternative — with criticism directed at anyone who raises the meta considerations, labelling them a 'doomer'. But it is right and fair to be concerned about the wider impacts of AI, particularly how the externalities are suffered mostly by communities that don't have the power to push back. No technology is without its costs, as well as its benefits. And we have a collective decision to make about how we handle this. If you're building a product strategy without considering the environmental and social impacts of your decisions — the third order effects — you're only doing half the job.

The next time you defer a decision to AI, ask yourself: am I augmenting my thinking, or avoiding it?
