#38 - Manufacturing consent

On predictions, prescriptions, and the futures we're told are inevitable

In 1966, Star Trek imagined aliens who could bend reality through sheer mental force. In 1981, Apple engineers realised their leader had the same power.

In ‘The Menagerie’, an episode from season 1 of Star Trek: The Original Series, we encounter a telepathic alien species called the Talosians. Survivors of a nuclear holocaust that rendered their planet completely uninhabitable, they lived their lives underground. They developed their mental powers to create illusions, and found this so addictive that they started abducting space travellers to use as a source of inspiration.

Fifteen years later, this inspired Bud Tribble at Apple to coin the term reality distortion field to describe Steve Jobs’ effect upon engineers working on the Macintosh project. It was a potent blend of charisma, drive and hyperbole that could bend truth and empower teams to believe they could ship what looked technically unfeasible against impossible deadlines.

This was a unique form of power: Jobs had a vision for the future, and willed it into existence through sheer force of mind.

But what this shows us is that our future may not be out of our control — a particular outcome can be chosen, if not designed. Today’s tech leaders learned from Jobs and are now wielding their own reality distortion fields around AI — a tiny minority deciding everyone’s future.

The 1% problem

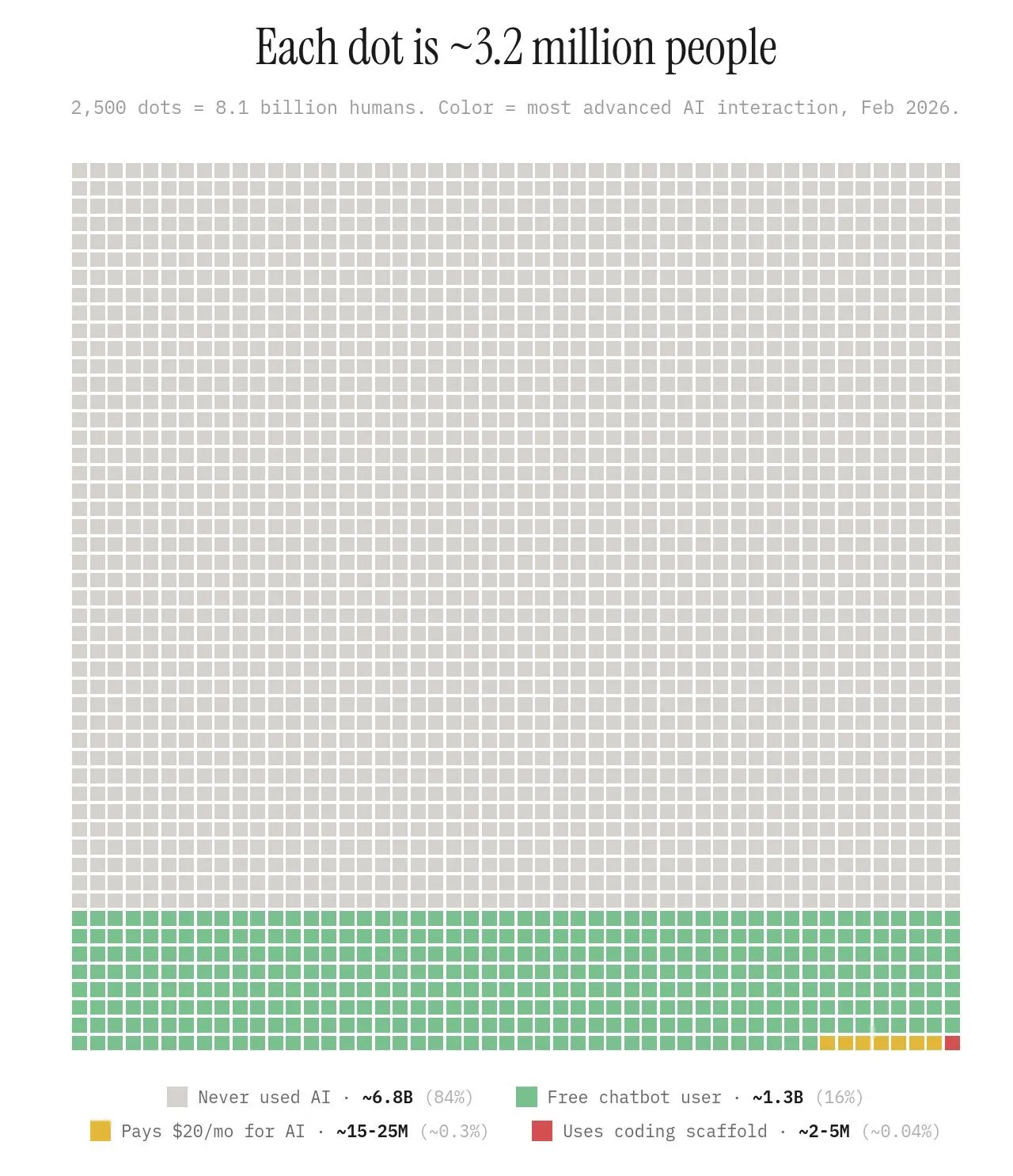

Let’s zoom out a bit now. A graph has been doing the rounds on LinkedIn that shows how much of the global population is using AI, and to what extent.

If you’re regularly using LLMs then you are amongst 16% of the global population. If you pay for it, you’re amongst 0.3%. And if you are coding with it, you’re in just 0.04% of the world’s total population.

This presents several problems.

First, this minority isn’t just small but also homogeneous. Those controlling the AI tech are predominantly Western, white male tech leaders. They’re building systems trained on Western data, and then exporting them globally without consideration or adaptation for local variance and cultural diversity.

Side note: Before COVID, I worked for a startup developing biometric technology for humanitarian programmes across the Global South: deworming in Ethiopia, health systems strengthening in Tanzania, maternal health in Bangladesh. The gap between what tech companies thought these communities needed and what they actually needed was staggering. I watched philanthropic organisations run projects that looked transformative on paper but functioned as white saviourism in practice. Colonialism is alive and well, and tech companies are its latest missionaries.

Second, this small, homogeneous group is wielding its own reality distortion field. Every press release, every conference keynote, every soundbite serves one purpose: manufacturing consent for their preferred future. When Altman says AI will make professions obsolete, he’s not warning you about a risk; he’s announcing his strategy. We mistake his statements for predictions, treating them as uncertain forecasts about what might happen, when they’re actually commitments. OpenAI’s plans are backed by billions in capital, and are positioned as inevitable so we’ll stop resisting them.

The consequences of these plans aren't abstract. They're already discernible against the metrics we use to track human development. Global poverty is assessed across seven key dimensions: access to water, housing, food, employment, education, gender equity, and religious freedom. Reports from the Pope Francis Global Poverty Index, the World Bank and Statista now show that progress in reducing poverty has stalled, and in some cases gone into reverse. AI has the potential not only to halt progress, but to accelerate regression in tackling this issue and move more countries below the poverty line.

Take Chile. One of South America's most stable economies, it’s now facing 8% unemployment and high inflation. This is where Amazon, Google and Microsoft chose to build their data centres. The infrastructure demands strain local water and electricity supplies while automation threatens jobs. AI isn't lifting countries out of poverty so much as actively pushing them towards it.

The gap between those who have and those who have not will continue to grow. The 0.3% who can afford premium AI access may get smarter, faster, and more capable. The rest of the world will fall further behind, experiencing job displacement and economic disruption. And AI will accelerate this faster than any previous technological revolution.

Is that the future we all want?

When skills atrophy

Within CPO Connect this week, a member invited us to add our views to this debate: “As AI takes on more of the cognitive and administrative load for people leaders, which skills do you think will atrophy fastest — and how are you actively keeping them sharp?”

This prompted some lively discussion amongst the rest of us about the necessity of skills such as judgement, hard conversation stamina, intuition, empathy, grammar and vocabulary. My own contribution centred around the loss of comprehension: literally being able to make sense of what you are reading or listening to.

I have personal experience of this already. I regularly review academic papers on leadership and space psychology, and some of these papers are very dense. Sometimes the abstract doesn’t give me enough to decide if I should read more, so I would ask Claude to summarise. Occasionally it got this wrong, hallucinating the subject matter or missing the nuance of the study. And more often than I would like, it did so catastrophically, inventing entirely different study variables and results. So I am still reading the articles to validate their utility, but I can imagine scenarios where people are pressed by a deadline and sacrifice this due diligence. The issue becomes using these tools without verifying their output, which of course takes time.

So I followed up with a question of my own: Is there a relationship between AI dependency and imposterism, where the tool meant to help actually undermines the development of internal knowledge that would resolve the imposter feelings in the first place?

This kicked off a lively discussion around how far we outsource our thinking. There was a sense that we may not be there just yet, but it could become reality.

What would be the impact of this?

We turn up to meetings thinking we understand a topic because we’ve read the AI summary, but it’s flawed because it contains errors, omissions or fabrications. Unfortunately we cannot pick those up because we’ve lost the basic comprehension needed to make sense of what is real and true. Then, when we get questioned by others, we either answer confidently but wrongly, or we cannot answer at all. It’s no longer a question of being found out as inadequate: we’ve become the very imposters we feared the most!

It reminded me of another conversation I had this week with Gary. On a LinkedIn post that claimed the competitive moat humans have now is that AI agents don’t have agency, he shared his thinking around comprehension debt. This is the insidious, slow erosion of organisational comprehension: the gap between how complex your situation is and how well anyone in your organisation understands it. When AI tools are used well, this isn’t an issue, but when they are used as a replacement for human judgement the debt racks up fast, and nobody is aware of it. It’s like muscle wastage when you don’t work out for a few weeks: you don’t notice it day to day until you go back to the gym and realise you can’t lift what you used to.

And this is the future that Altman et al. are bringing to us. One where we become dependent, deskilled and disposable. If you think I’m exaggerating, we only have to look at the news headlines to see companies laying off large chunks of their workforce, citing AI as the cause; just this week Meta announced a 20% cut. What we have also seen, though, is that companies making these cuts later come to regret it. Notably, Klarna cut 700 workers, then rehired them after its customer service degraded and people complained.

This shows us how the reality distortion field works. Altman says 'AI will do X', so we start behaving as if it already does. We offload comprehension and let skills atrophy. His prediction becomes self-fulfilling not because it was inevitable, but because we made it so.

So, what do we do?

The small group painting AI’s future as unstoppable progress are doing exactly what Jobs did: willing their preferred outcome into existence through sheer force of belief and capital.

The difference is, we are wise to this, and we don’t have to accept their version of reality.

If you don’t like this painted future, then you can choose your own. Together as consumers we have collective power to decline and push back on what we don’t want. There is no doubt that AI can serve a purpose as an assistive tool, but I do not believe it is a full replacement for thinking. Instead, we need to continue investing in human thought, communication and connection, in deliberately embracing thinking that is difficult or stressful in order to retain our capacity for it, and in exercising judgement over what we consume and who we listen to. These are the skills that will make us irreplaceable in the long run, and we must use them, or lose them.

What future are you choosing to build?

If you're tired of investing in personal and professional development that doesn't stick, I work with women leaders in tech and space through executive coaching and speaking engagements.